How big of a letdown is it when you are reading a web page, find something interesting enough to click on, and are then dropped down the 404 Not Found hole?

Often the maintainer of a site does not even know what is broken, especially on content-oriented sites with a healthy number of outbound links.

Much like broken and flaky tests, compiler warnings, test coverage, code quality, and consistent configuration and tooling across promotion environments, the sooner you establish [Picard voice] "the line must be drawn here", the better off you are. Once you have a set standard for what is acceptable and good visibility into what is crossing that line, you have a fighting chance of understanding where to invest to maintain or improve that standard.

For a blog like this one, hyperlinks can break in a few different ways. Internal links can be broken from the start due to unforced errors (e.g., typos) or software upgrades. Links going out to other sites can drift into a broken state when an external site makes changes or is taken offline.

Muffet

I wanted to see how this site was doing and had recently stumbled on Muffet, a supa-fast, open source, Go-based broken link checker.

The first time I ran Muffet, I discovered an embarrassingly long list of broken links here.

Here are some choice examples from a trimmed down version of that first run:

    $ muffet https://mattorb.com
    96  https://mattorb.com/swift-2-to-5/amp/
    97      404 https://mattorb.com/swift-2-to-5/swift%20half%20open%20range
    103 https://mattorb.com/fuzzy-find-github-repository/
    104     404 https://mattorb.com/fuzzy-find-github-repository/GitHubAPIv3%7CGitHubDeveloperGuide
    105     404 https://mattorb.com/fuzzy-find-github-repository/github.com/shurcooL/githubv4
    $

How to read this output: By default, Muffet only puts broken links in the output. Hierarchy is expressed through indentation, so #97 above is a link that was walked while parsing the page at #96. The unindented number is a counter of links checked, and the indented numbers are HTTP return codes (404 = not found).
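To make that reading concrete, here is a minimal Go sketch that groups the broken links under the page they were found on. It assumes the exact line shapes shown in the listing above (a leading counter, then either a page URL or a status followed by a URL); that layout is taken from this post, not from Muffet's documentation, and `ParseReport` and `BrokenLink` are names invented for the example.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// BrokenLink is one failing link reported underneath a page.
type BrokenLink struct {
	Status string // e.g. "404"
	URL    string
}

// ParseReport groups broken links by the page they were found on.
// Assumed line shapes (from the listing in this post):
//
//	"<counter> <page URL>"            starts a new page
//	"<counter> <status> <broken URL>" is a broken link on the current page
func ParseReport(report string) map[string][]BrokenLink {
	pages := make(map[string][]BrokenLink)
	current := ""
	for _, line := range strings.Split(report, "\n") {
		fields := strings.Fields(line)
		if len(fields) < 2 {
			continue // blank lines and bare shell prompts
		}
		if _, err := strconv.Atoi(fields[0]); err != nil {
			continue // no leading counter, e.g. the "$ muffet ..." command line
		}
		rest := fields[1:] // drop the counter
		if len(rest) == 1 {
			current = rest[0] // a page that contains broken links
			continue
		}
		if current != "" {
			link := BrokenLink{Status: rest[0], URL: rest[len(rest)-1]}
			pages[current] = append(pages[current], link)
		}
	}
	return pages
}

func main() {
	report := `96 https://mattorb.com/swift-2-to-5/amp/
97     404 https://mattorb.com/swift-2-to-5/swift%20half%20open%20range
103 https://mattorb.com/fuzzy-find-github-repository/
104     404 https://mattorb.com/fuzzy-find-github-repository/GitHubAPIv3%7CGitHubDeveloperGuide
105     404 https://mattorb.com/fuzzy-find-github-repository/github.com/shurcooL/githubv4`

	for page, links := range ParseReport(report) {
		fmt.Printf("%s: %d broken link(s)\n", page, len(links))
	}
}
```

Grouping by page is handy because the fix usually happens in one post at a time, not one link at a time.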

All of the link issues above were unforced errors as far as I can tell. Additionally, the stuff I trimmed out included errors for images that had gone missing and links to external sites that were no longer valid.

Awesome! We now have a way to assess the whole site for broken links. The problem is we just found a whole bunch of broken things all at once, which means a whole bunch of work to fix them.

Prefer small fixes right away

Next time a link breaks, I want to be fixing just that one thing and be done -- rather than looking at a large pile of issues that have accumulated over a longer period of time.

Ideally, I want broken links assessed:

- Automatically, before publishing new content – to catch unforced errors before they go live

- Automatically, on configuration changes and software upgrades – to catch unexpected interactions of new software and existing content

- Automatically, on a schedule – to catch drift in the health of links to external party sites

- On demand, to confirm I have fixed issues after making changes

What I need is one place that can run a broken link checker, either manually or programmatically, record the results, and notify me when things break. That would cover all of the cases above.

After my other recent experiment with a GitHub Action, that seemed like a good candidate.

A GitHub Action for Muffet

Always Google first, to see if someone else has already done similar work.

I found an archived GitHub repo from peaceiris that had a Muffet GitHub Action. I have no idea why they archived it, but it seems to work fine, so I forked it to keep a copy.

To use that action from our project [checked in to a GitHub repo], I added a workflow at .github/workflows/checklinks.yml:

    name: checklinks
    on: [push]
    jobs:
      build:
        runs-on: ubuntu-latest
        steps:
          - name: Check links on site
            uses: mattorb/actions-muffet@v1.3.1
            with:
              args: >
                --timeout 20
                https://mattorb.com

This triggers Muffet to check for broken links on every push to the repository. Since `on: [push]` has no branch filter, that means pushes to any branch, not only master. GitHub builds the action via a Docker build of the GitHub repo mattorb/actions-muffet at tag v1.3.1.

As noted earlier, sometimes external links go bad due to changes outside our awareness, so below we limit the push trigger to master and add a schedule stanza to run this check regularly as well:

    name: checklinks
    on:
      push:
        branches:
          - master
      schedule:
        - cron: '0 13 * * 6'
    jobs:
      build:
        runs-on: ubuntu-latest
        steps:
          - name: Check links on site
            uses: mattorb/actions-muffet@v1.3.1
            with:
              args: >
                --timeout 20
                https://mattorb.com

Now, in addition to executing on pushes to master, that cron schedule sets this check to happen automatically once a week. ('0 13 * * 6' == 13:00 UTC on Saturdays; GitHub evaluates schedules in UTC.)

When it fails, GitHub sends you an e-mail (with the default notification settings).

Also, this handy badge can be placed at the top of README.md in the git repo, or on the site itself:

That badge is live, so hopefully it reads 'passing' when you are reading this article! For how to make one, see the GitHub docs.

At this point, we have a GitHub Action in place that will be kicked off in a few scenarios:

- Manually triggered via the GitHub web UI

- Automatically triggered via a push to the git repo's master branch

- Automatically triggered once a week

Going Further

One GitHub Actions trigger I'm particularly interested in, since my blog workflow has not moved over to a static generation approach yet, is repository_dispatch. It is still in developer preview, but it offers a way to trigger a GitHub Actions workflow based on an external event.

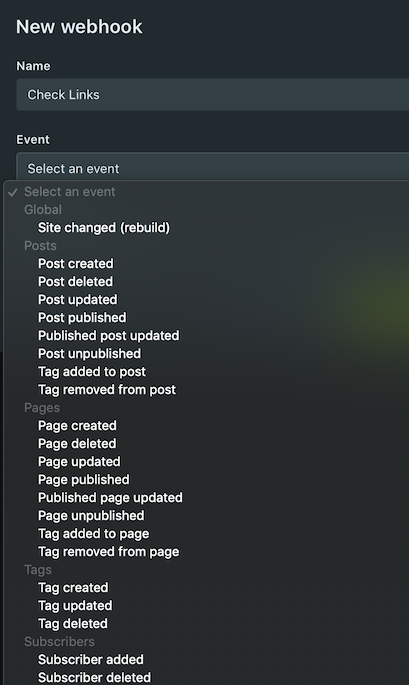

Ghost has webhooks that can be triggered for various types of modifications:

Tying one or more of those to the GitHub Action via a repository_dispatch event will require building something that either receives the Ghost JSON webhook payload and posts the JSON payload GitHub expects, or extends Ghost itself with a custom webhook integration for repository dispatch. That is a small future project.

UPDATE: here is a quick stab at that in Go. I point Ghost webhooks at it for the 'New post published' and 'Published post updated' events to trigger broken link checking on those two events. It is a bit naive in that it scans the whole site every time a new post is published.
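For readers who want the general shape of such a relay, here is a minimal sketch under stated assumptions. It is not the code linked above. It ignores the Ghost payload entirely and forwards "something changed" to GitHub's repository dispatch endpoint (`POST /repos/{owner}/{repo}/dispatches`). The `/ghost` path, port 8080, the `ghost-post-changed` event type, and the three environment variable names are all invented for illustration.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"log"
	"net/http"
	"os"
)

// newDispatchRequest builds the POST that asks GitHub to emit a
// repository_dispatch event. eventType is a free-form label of our choosing.
func newDispatchRequest(owner, repo, token, eventType string) (*http.Request, error) {
	body, err := json.Marshal(map[string]string{"event_type": eventType})
	if err != nil {
		return nil, err
	}
	url := fmt.Sprintf("https://api.github.com/repos/%s/%s/dispatches", owner, repo)
	req, err := http.NewRequest(http.MethodPost, url, bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	// A personal access token with access to the repo is assumed here.
	req.Header.Set("Authorization", "token "+token)
	// While the feature was in preview, a preview media type was required instead.
	req.Header.Set("Accept", "application/vnd.github+json")
	req.Header.Set("Content-Type", "application/json")
	return req, nil
}

// relayHandler accepts any POST (e.g. a Ghost webhook) and forwards a dispatch.
func relayHandler(owner, repo, token string) http.HandlerFunc {
	return func(w http.ResponseWriter, r *http.Request) {
		if r.Method != http.MethodPost {
			http.Error(w, "POST only", http.StatusMethodNotAllowed)
			return
		}
		req, err := newDispatchRequest(owner, repo, token, "ghost-post-changed")
		if err != nil {
			http.Error(w, err.Error(), http.StatusInternalServerError)
			return
		}
		resp, err := http.DefaultClient.Do(req)
		if err != nil {
			http.Error(w, err.Error(), http.StatusBadGateway)
			return
		}
		defer resp.Body.Close()
		// GitHub answers a successful dispatch with 204 No Content.
		if resp.StatusCode != http.StatusNoContent {
			http.Error(w, "GitHub replied "+resp.Status, http.StatusBadGateway)
			return
		}
		w.WriteHeader(http.StatusNoContent)
	}
}

func main() {
	owner, repo, token := os.Getenv("GITHUB_OWNER"), os.Getenv("GITHUB_REPO"), os.Getenv("GITHUB_TOKEN")
	if owner == "" || repo == "" || token == "" {
		fmt.Println("set GITHUB_OWNER, GITHUB_REPO and GITHUB_TOKEN to start the relay")
		return
	}
	http.HandleFunc("/ghost", relayHandler(owner, repo, token))
	log.Fatal(http.ListenAndServe(":8080", nil))
}
```

For the dispatch to start a run, the workflow also has to list `repository_dispatch` among its `on:` triggers. A real deployment would additionally want to authenticate the incoming webhook so that strangers cannot trigger scans.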